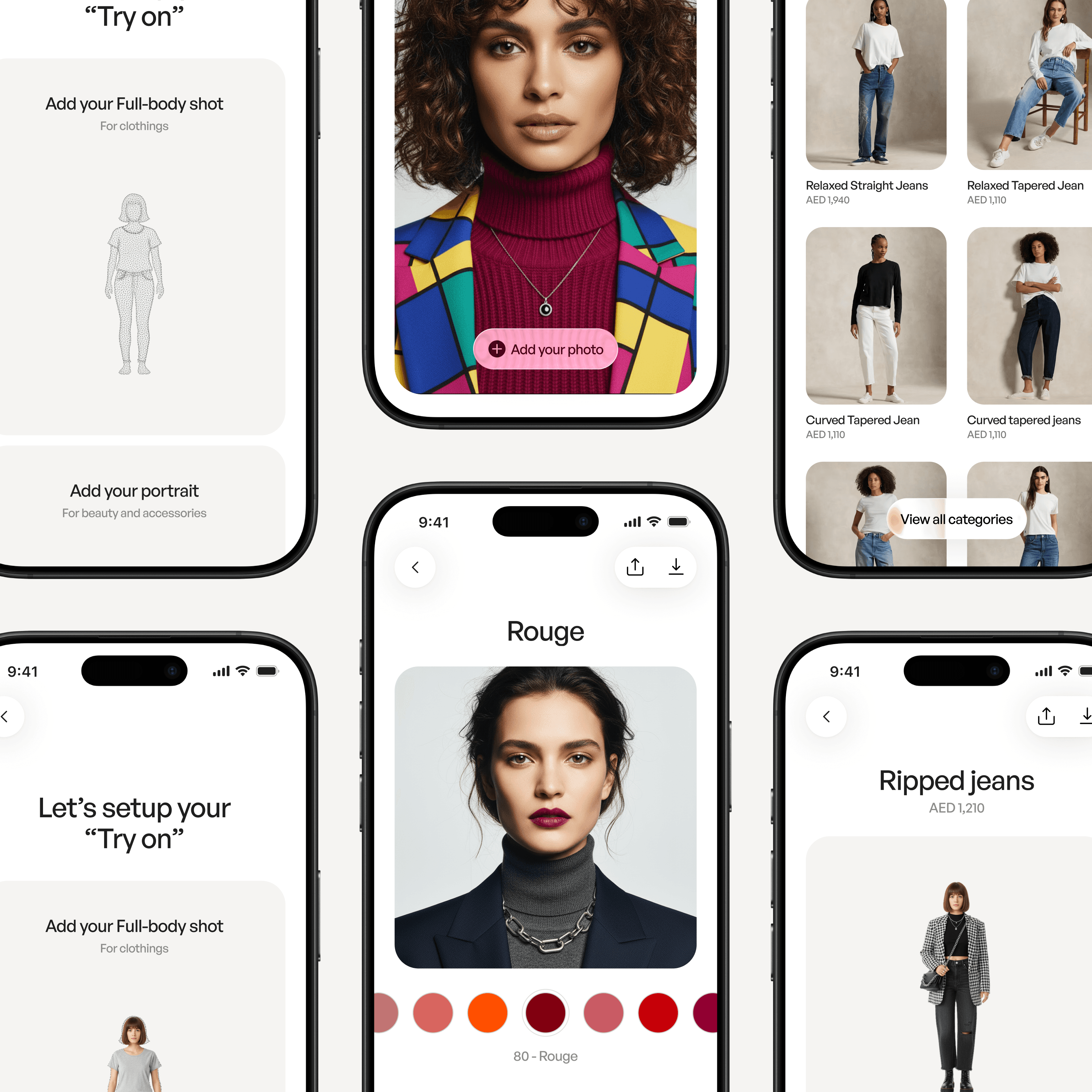

Using Generative Models to Improve Try-On Experiences

Feb 28, 2026

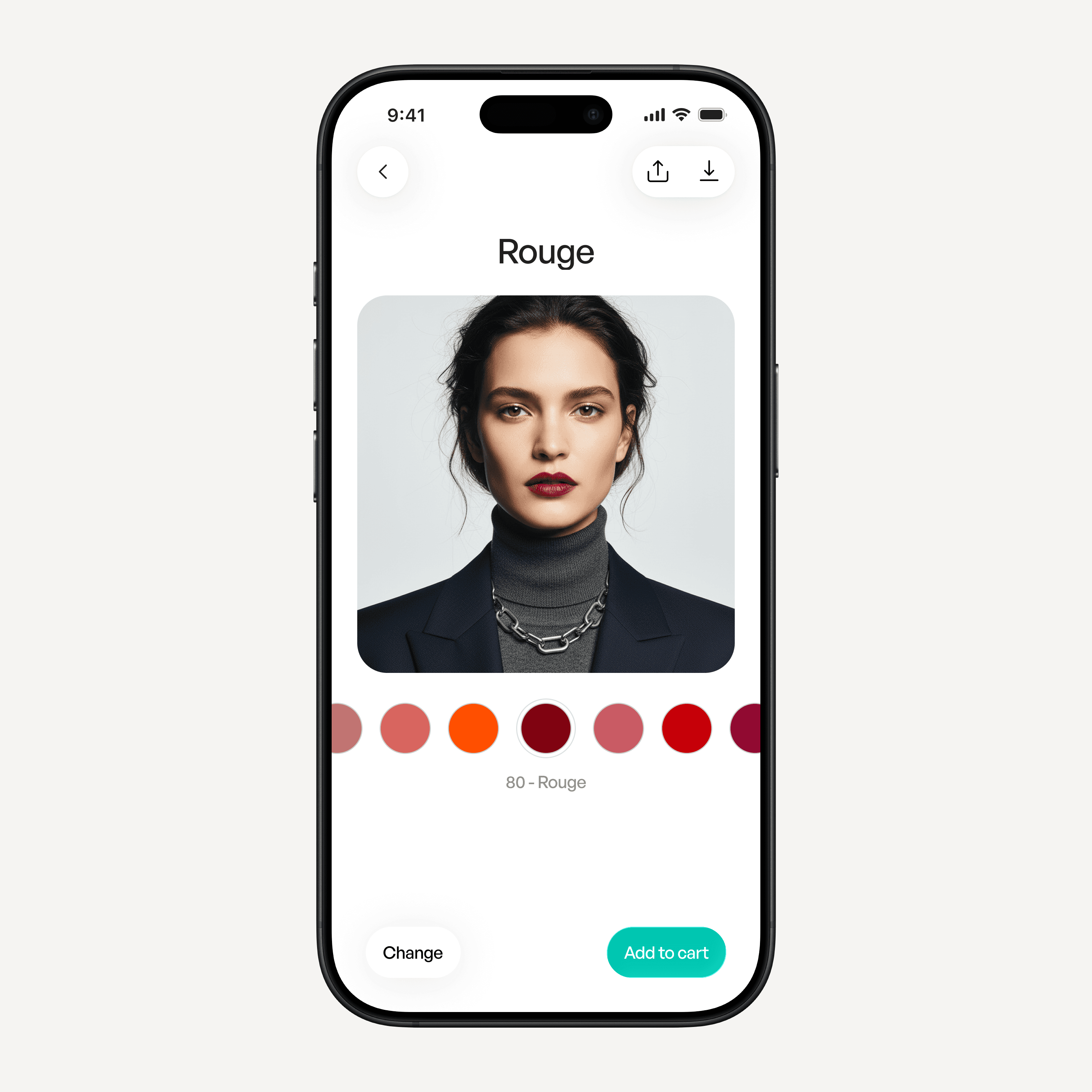

Over the past few months, I’ve been studying how generative models are starting to affect very practical user experiences. One area that stands out is digital try-on.

Online shopping has always carried a structural limitation. When we buy clothing online, we are making a decision without direct physical feedback. We rely on model photos, size charts, written descriptions, and occasionally customer reviews. All of these are indirect proxies for something physical.

The result is uncertainty.

Earlier attempts tried to solve this with augmented reality and 3D overlays. A camera would detect your body or face and place a digital garment or accessory on top. From a technical standpoint, this was impressive. But from an experiential standpoint, it often felt slightly disconnected from reality. Fabric did not deform naturally. Lighting did not fully integrate. The garment appeared placed rather than worn.

These systems reduced friction slightly, but they did not eliminate doubt.

What is changing now is not the intention, but the method.

From Overlay to Regeneration

Generative models allow a different approach. Instead of attaching a 3D object to a live feed, the system can generate a new image in which the garment is integrated into the scene itself.

This distinction is subtle but important.

In an overlay system, the product is added.

In a generative system, the image is reconstructed.

When reconstruction happens, several variables can adjust simultaneously:

The folds of the fabric respond to posture.

The lighting interacts with material properties.

The fit changes when size parameters change.

Color is rendered against skin tone and ambient light.

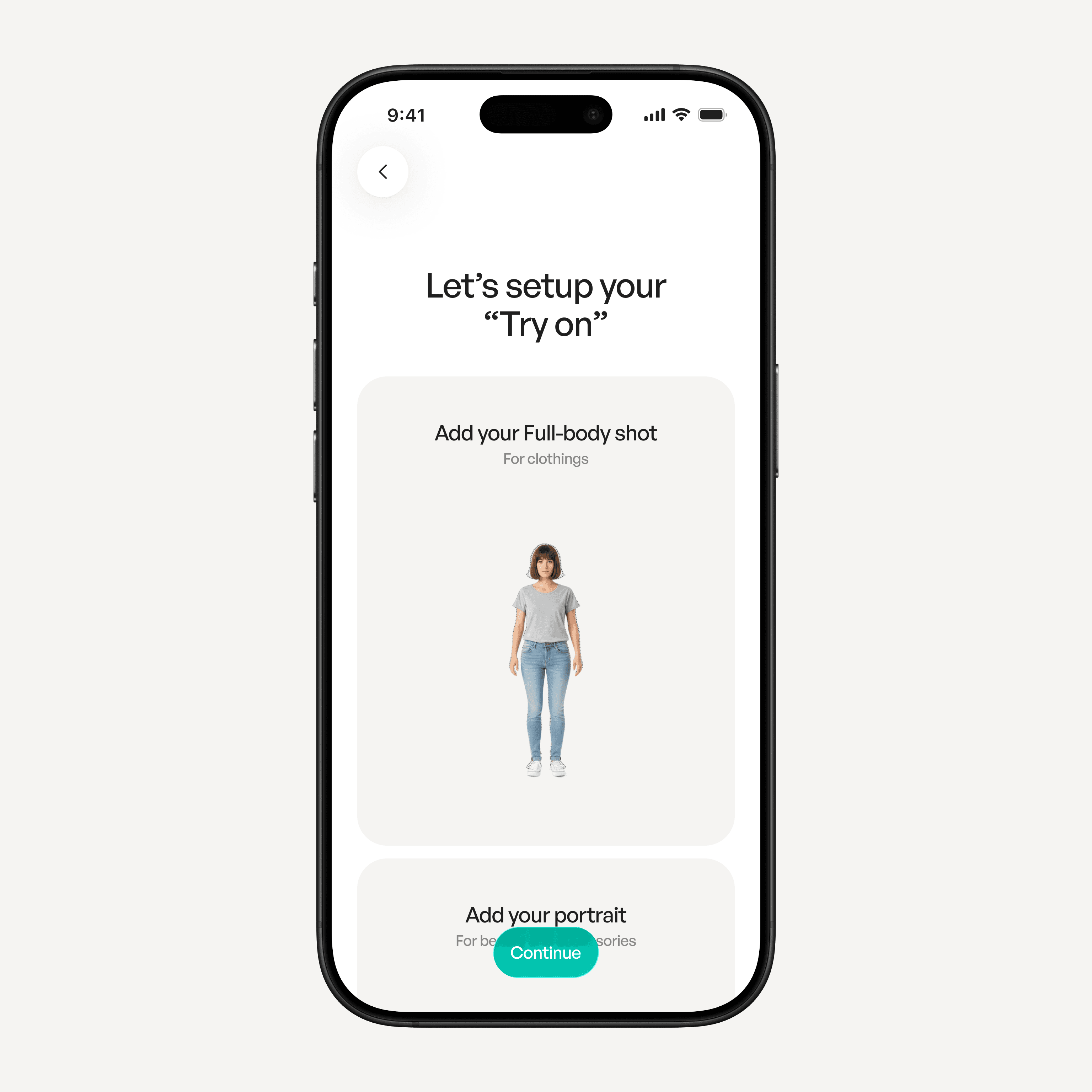

This creates a different type of feedback loop for the user. The output feels less like a technical demonstration and more like a visual scenario.

The user is not watching an object being placed.

They are observing a version of themselves.

That difference affects trust.

Existing Implementations and Their Limits

It is worth acknowledging that this direction is already being explored. Google, for example, has introduced AI-based try-on features for clothing.

Technically, the results are strong. The garments look realistic. The generation quality is improving quickly.

However, the main limitation is not model capability. It is the experience architecture.

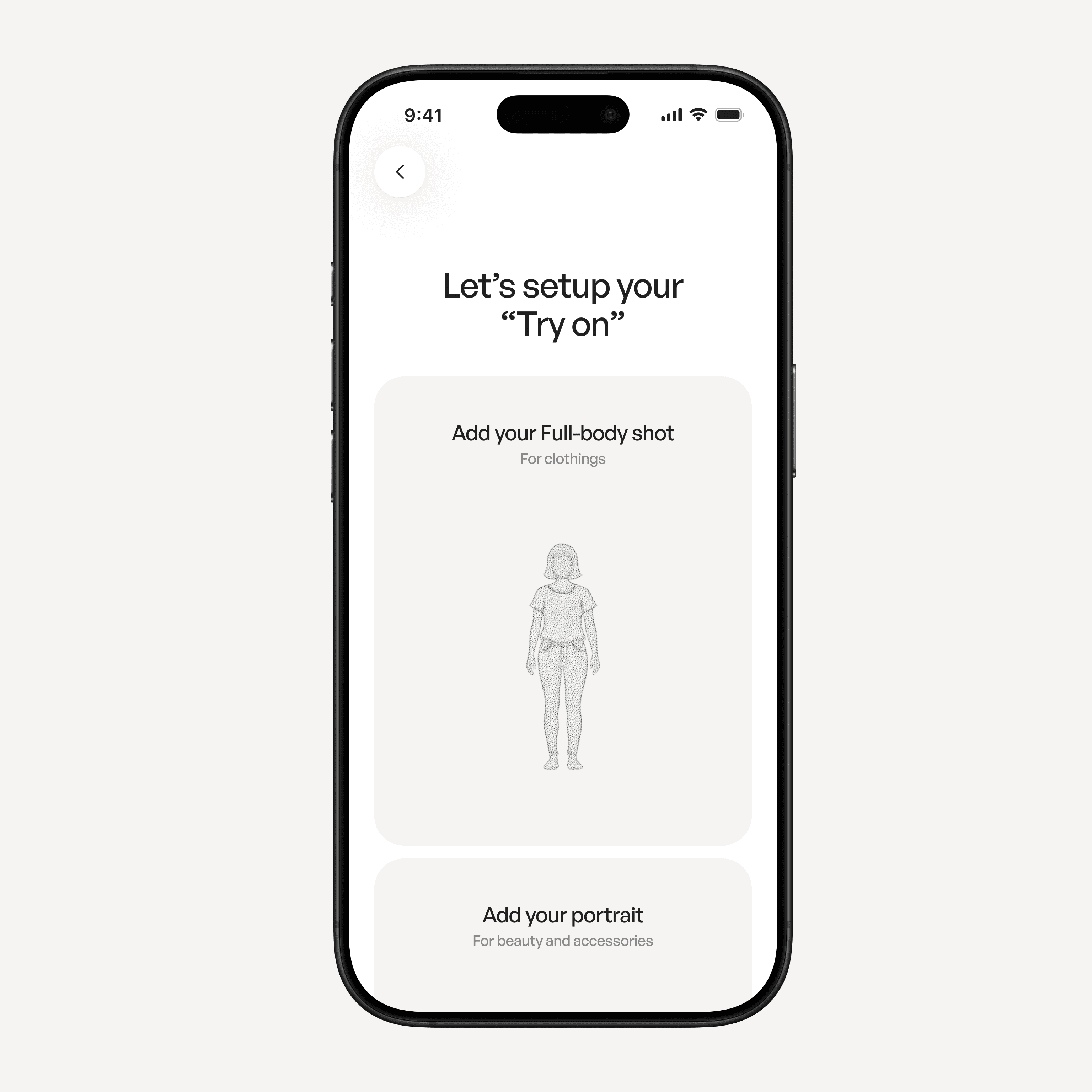

Most implementations treat try-on as a feature attached to a single product page. You upload a photo. The system generates a result. Then the interaction ends. The data does not persist in a meaningful way. There is no long-term profile that understands your proportions, your measurements, or your previous interactions.

As a result, each interaction feels isolated.

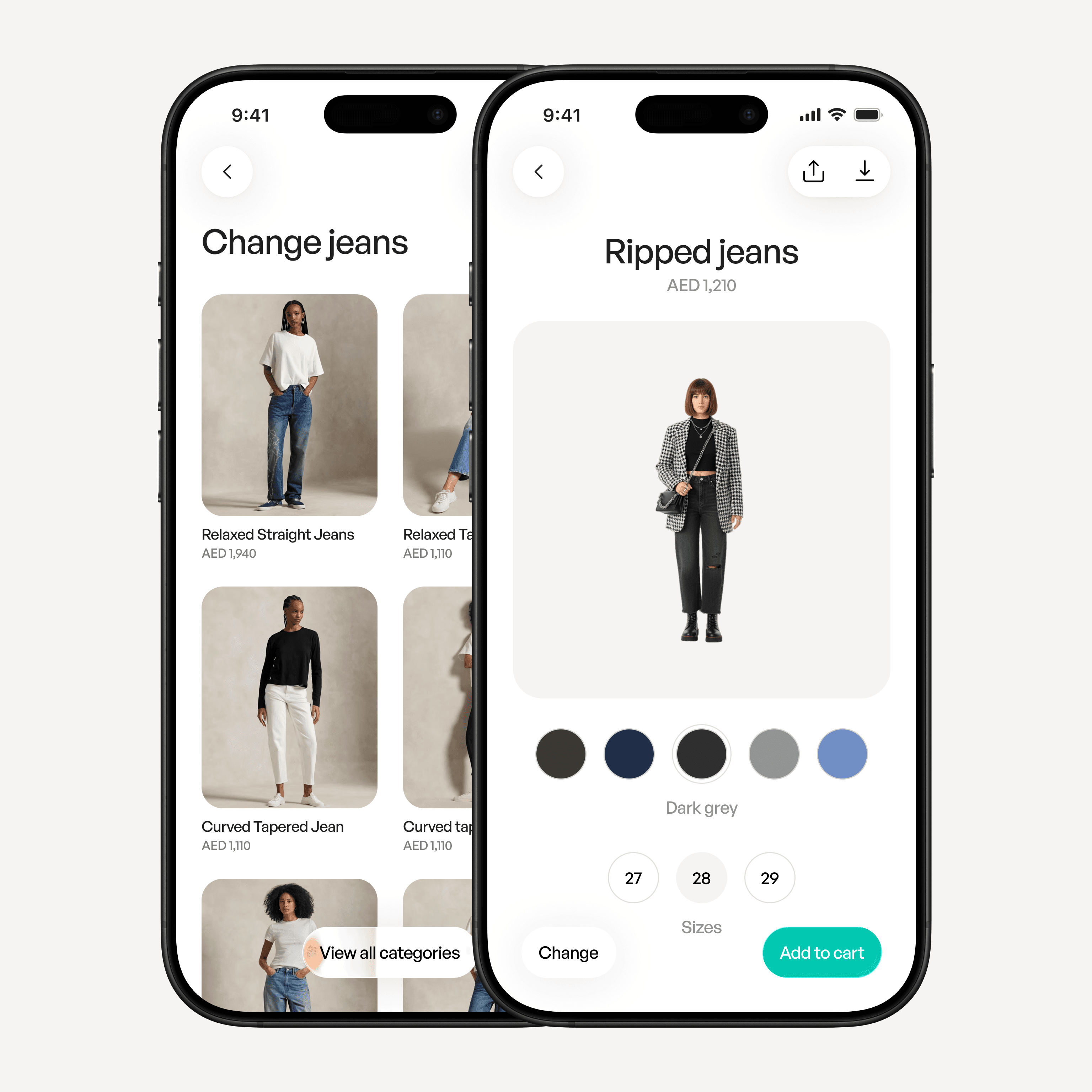

If generative try-on is going to mature into a stable interface pattern, it likely requires a persistent user layer. Something closer to a visual identity profile. A structured model of your body proportions, sizing preferences, and perhaps even posture variations.

Once that profile exists, the try-on experience stops being a repeated upload process and becomes a continuous system. The user carries their visual profile across brands and platforms. The generative layer then operates on top of that baseline.

This is less a model problem and more an experience design problem.
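To make the idea of a persistent user layer more concrete, here is a minimal Python sketch of what such a profile might hold and how a try-on request could be built on top of it instead of starting from a fresh upload. Every name here (`VisualProfile`, `TryOnRequest`, `build_request`, and the fields) is hypothetical and does not describe Google's or any other existing product. The generative model itself is deliberately left out; the sketch only shows the stable baseline it would operate on, plus JSON serialization as one simple way to make the profile portable.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class VisualProfile:
    """Hypothetical persistent profile a user carries across brands."""
    user_id: str
    measurements_cm: dict[str, float]  # e.g. chest, waist, inseam
    preferred_fit: str = "regular"     # e.g. "slim", "regular", "relaxed"
    reference_poses: list[str] = field(default_factory=list)  # IDs of stored pose images
    history: list[str] = field(default_factory=list)          # garments tried so far

    def to_json(self) -> str:
        # Serialize to plain JSON so another platform could import the profile.
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json(cls, raw: str) -> "VisualProfile":
        return cls(**json.loads(raw))


@dataclass
class TryOnRequest:
    """Hypothetical input to a generative try-on step: baseline plus product."""
    profile: VisualProfile
    garment_id: str
    size: str
    pose_id: str


def build_request(profile: VisualProfile, garment_id: str, size: str) -> TryOnRequest:
    # Reuse a stored pose rather than asking the user to upload a new photo.
    if not profile.reference_poses:
        raise ValueError("profile has no reference poses; an upload is still needed")
    # Record the interaction on the profile so it persists beyond this product page.
    profile.history.append(garment_id)
    return TryOnRequest(profile, garment_id, size, profile.reference_poses[0])


# Usage: one profile, reused across products and restored on another platform.
profile = VisualProfile("u1", {"chest": 96.0, "waist": 82.0}, reference_poses=["pose-front"])
req = build_request(profile, "jacket-123", "M")
restored = VisualProfile.from_json(profile.to_json())
```

The design choice the sketch is meant to highlight is where state lives: in a single-page feature the photo and result are discarded after one generation, whereas here measurements, poses, and history sit in the profile and each new request only adds the product-specific part.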

The Role of Uncertainty in Purchase Decisions

From a psychological perspective, much of the hesitation in online fashion stems from predictive uncertainty.

The buyer is not evaluating fabric in isolation. They are evaluating a projection of themselves wearing it. When that projection is weak, the risk feels high. Returns increase. Decision time increases.

Generative simulation narrows that predictive gap. It does not eliminate uncertainty completely, but it makes the imagined outcome more concrete.

There is an important cognitive shift here. Previously, the user had to construct a mental simulation. Now the system performs that simulation visually. This reduces abstraction and reduces the cognitive effort required to imagine the outcome.

The decision becomes less speculative.

That has implications not only for fashion, but for any domain where the final result is visual:

Makeup shades

Eyewear proportions

Furniture placement

Interior material changes

In all of these cases, the user is trying to answer the same question: “How will this look in my context?”

Generative models are starting to provide a structured answer.

Why This Direction Deserves Careful Design

It would be easy to treat this as a novelty feature. But I think it deserves more careful thought.

If the experience is fragmented, users will treat it as a gimmick.

If it is persistent and integrated, it becomes part of decision-making infrastructure.

That difference depends on interface continuity, data portability, and trust.

The models are improving rapidly. The remaining challenge is designing the system around them in a way that feels stable and intentional.